Projects

A selection of projects demonstrating my work across robotics, embedded systems, and software.

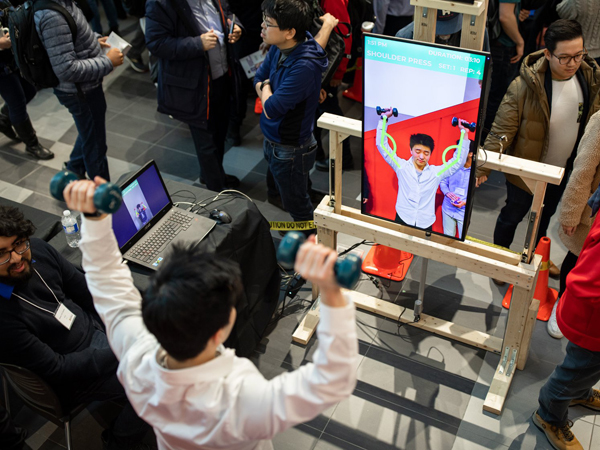

A smart mirror that watches your workout and tells you when your form breaks down — in real time.

Built as a capstone project, Spoteria is a smart mirror that monitors exercise form in real time and delivers colour-coded visual feedback along the x, y, and z axes. Joint movement data captured with a Microsoft Kinect was used to train a machine learning model in Turi to distinguish correct form from incorrect form. An actuator adjusts the screen height automatically so the mirror works for seated and standing exercises alike. Core software was written in C# and C, with Arduino handling actuator control.

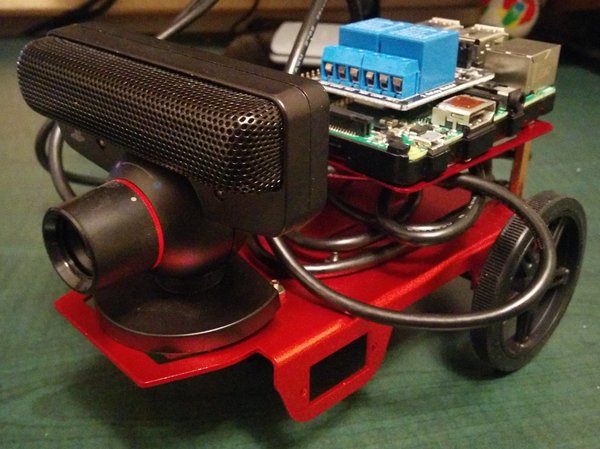

A Raspberry Pi robot that navigates obstacle courses autonomously using computer vision.

RaspBot is a semi-autonomous ground robot built around a Raspberry Pi. Using OpenCV for real-time image processing and a suite of sensors for environmental awareness, the robot navigates miniature road-style courses without human input. The navigation logic is implemented in C++ and Python, with computer vision driving lane detection and obstacle avoidance decisions.
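
To give a feel for the decision step that follows lane detection, here is a minimal Python sketch. It is not RaspBot's actual code: the function names, the single-scanline input, and the steering gain are all assumptions made for illustration, and the thresholding that would produce the scanline (done with OpenCV on the robot) is left out.

```python
def lane_center_offset(row):
    """Return the lane centre offset from the image centre, in [-1, 1].

    `row` is one scanline of a thresholded image: truthy where a lane
    marking was detected, falsy elsewhere. Returns None when no marking
    is visible or the row is too short to have a centre.
    """
    if len(row) < 2:
        return None
    marked = [i for i, px in enumerate(row) if px]
    if not marked:
        return None
    # Simplification: treat the outermost markings as the two lane edges.
    lane_center = (marked[0] + marked[-1]) / 2.0
    image_center = (len(row) - 1) / 2.0
    return (lane_center - image_center) / image_center


def steering_command(offset, gain=0.8):
    """Map an offset to a steering value in [-1, 1]; `gain` is hypothetical."""
    if offset is None:
        return 0.0  # no lane seen: hold the wheels straight
    return max(-1.0, min(1.0, gain * offset))
```

A positive result steers right and a negative one steers left. If only one marking is visible, this sketch steers toward it, which a real implementation would need to handle separately.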

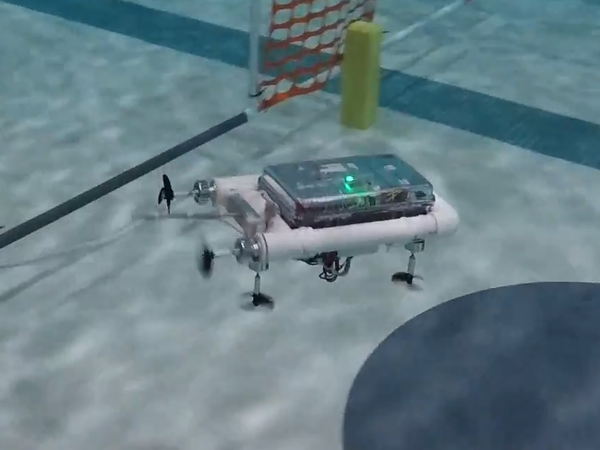

A semi-autonomous underwater vehicle with a PD controller for stable depth and heading.

Cyclops is an Arduino-powered ROV designed for autonomous underwater navigation. A 9-DoF IMU feeds sensor fusion algorithms to estimate yaw, pitch, and roll, while a pressure sensor handles depth and light sensors provide environmental context. A PD controller coordinates the four-motor drivetrain to hold heading and depth accurately. The project went through rapid hardware and software iteration — every prototype test informed the next mechanical or firmware revision.
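
For readers unfamiliar with PD control, the idea can be sketched in a few lines of Python. This is an illustration only, not the Cyclops firmware (which runs on an Arduino): the class, the gains, and the heading helper are hypothetical, and the output would still need to be mixed across the four motors.

```python
from dataclasses import dataclass


def wrap_angle_deg(angle: float) -> float:
    """Wrap an angle in degrees to the range [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


@dataclass
class PDController:
    kp: float  # proportional gain (hypothetical, would be tuned in the water)
    kd: float  # derivative gain (hypothetical)
    prev_error: float = 0.0
    first_update: bool = True

    def update(self, error: float, dt: float) -> float:
        """Return the control output for the current error and time step."""
        if self.first_update:
            derivative = 0.0  # no previous sample to differentiate against
            self.first_update = False
        else:
            derivative = (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.kd * derivative


def heading_command(ctrl: PDController, target_deg: float,
                    measured_deg: float, dt: float) -> float:
    """Compute a turn command, taking the shortest way around the circle."""
    error = wrap_angle_deg(target_deg - measured_deg)
    return ctrl.update(error, dt)
```

The proportional term pushes the vehicle toward the target, while the derivative term damps the motion so it does not overshoot. Depth hold would use the same controller with the pressure-derived depth as the measurement and no angle wrapping.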

Fly a Crazyflie quadcopter with nothing but your hands, using LEAP Motion gesture tracking.

An extension of the Maestro project, Free Fly takes gesture-based control into three dimensions. A LEAP Motion controller tracks hand position and orientation in 3D space, mapping movements to the yaw, roll, and pitch of a Crazyflie quadcopter. The interface is designed to feel natural: tilting your hand banks the drone, and raising your hand makes it climb. Built in Python using the LEAP Motion SDK and the Crazyflie Python API.
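
The mapping from hand pose to flight setpoint might look roughly like the sketch below. Everything here is assumed for illustration: the function names, the dead zone, the tilt limit, and the height range are hypothetical, and the code deliberately leaves out the LEAP Motion and Crazyflie calls that would supply the inputs and consume the outputs.

```python
MAX_TILT_DEG = 30.0       # hypothetical limit on commanded roll/pitch/yaw
TILT_DEADZONE_DEG = 5.0   # hypothetical: ignore small hand tremors
MIN_HEIGHT_MM = 100.0     # hypothetical hand height for zero thrust
MAX_HEIGHT_MM = 400.0     # hypothetical hand height for full thrust


def apply_deadzone(value: float, deadzone: float) -> float:
    """Return 0.0 for inputs smaller than the dead zone, else the input."""
    return 0.0 if abs(value) < deadzone else value


def clamp(value: float, low: float, high: float) -> float:
    """Limit value to the closed interval [low, high]."""
    return max(low, min(high, value))


def hand_to_setpoint(roll_deg: float, pitch_deg: float,
                     yaw_deg: float, height_mm: float) -> dict:
    """Convert a hand pose into normalised quadcopter setpoints."""
    def tilt(angle: float) -> float:
        return clamp(apply_deadzone(angle, TILT_DEADZONE_DEG),
                     -MAX_TILT_DEG, MAX_TILT_DEG)

    # Thrust is normalised to [0, 1] from the hand height above the sensor.
    span = MAX_HEIGHT_MM - MIN_HEIGHT_MM
    thrust = clamp((height_mm - MIN_HEIGHT_MM) / span, 0.0, 1.0)
    return {"roll": tilt(roll_deg), "pitch": tilt(pitch_deg),
            "yaw": tilt(yaw_deg), "thrust": thrust}
```

The dead zone keeps the drone steady when the hand is roughly level, and the clamps stop an exaggerated gesture from commanding an unsafe attitude.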

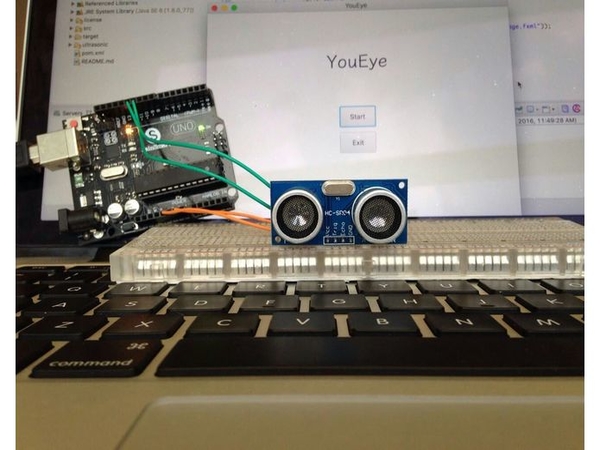

A wearable assistant that narrates your surroundings in real time for visually impaired users.

Built at Hack the North, YouEye is a vision-to-speech assistant for people who are visually impaired. A forward-facing camera captures frames continuously, which are sent to the Google Cloud Vision API for object identification. The system estimates the distance to detected objects and escalates its spoken feedback accordingly: quiet confirmation when the path is clear, a caution warning as objects approach, and a direct alert when they are close. The front end was built with HTML, CSS, and JavaScript.
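
The three-tier escalation can be sketched as a simple threshold function. This is an illustrative Python version, not the code written at the hackathon; the distance thresholds, level names, and spoken phrases are assumptions.

```python
def alert_level(distance_m, caution_m=3.0, alert_m=1.0):
    """Classify an estimated distance into a feedback tier.

    `distance_m` is None when nothing was detected. The thresholds are
    hypothetical defaults, not values taken from the project.
    """
    if distance_m is None:
        return "clear"
    if distance_m <= alert_m:
        return "alert"
    if distance_m <= caution_m:
        return "caution"
    return "clear"


def spoken_feedback(label, distance_m):
    """Build the phrase to speak for a detected object (wording assumed)."""
    level = alert_level(distance_m)
    if level == "alert":
        return f"Stop. {label} directly ahead."
    if level == "caution":
        return f"Caution, {label} approaching."
    return "Path clear."
```

For example, `spoken_feedback("chair", 0.5)` produces the direct alert, while `spoken_feedback("chair", 10.0)` produces the quiet confirmation.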

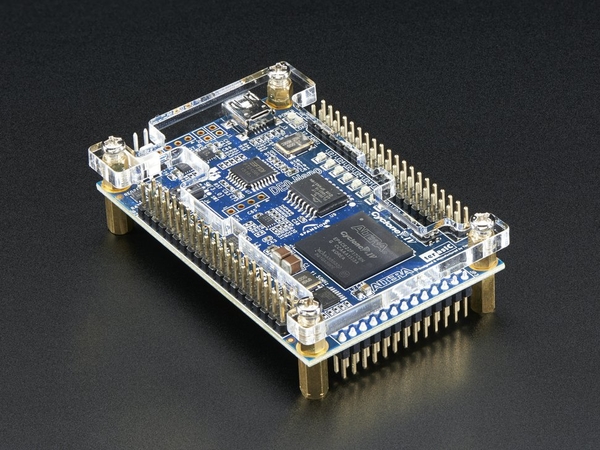

A fully functional audio player built on an FPGA — custom drivers, interrupts, and all.

Programmed a DE1-SoC FPGA board from scratch to function as a .wav media player. This meant writing custom device drivers for every peripheral, handling SD card I/O at a low level, implementing audio processing pipelines, and managing hardware interrupts for playback controls. The Altera toolchain (Quartus, Qsys, Nios II) was used throughout. Every layer of the stack, from register-level debugging to synchronisation between peripherals, was handled in C.
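
One small piece of any .wav player is reading the file header before streaming samples. The project did this in C on the board; the Python sketch below only illustrates the idea, and it assumes the canonical 44-byte PCM header layout (real files may carry extra chunks that this does not handle). The `WavInfo` type and function name are hypothetical.

```python
import struct
from typing import NamedTuple

# Canonical little-endian RIFF/WAVE header: 44 bytes for plain PCM.
WAV_HEADER = struct.Struct("<4sI4s4sIHHIIHH4sI")


class WavInfo(NamedTuple):
    channels: int
    sample_rate: int
    bits_per_sample: int
    data_size: int  # number of bytes of sample data that follow


def parse_wav_header(header: bytes) -> WavInfo:
    """Parse a canonical PCM WAV header, raising ValueError if malformed."""
    if len(header) < WAV_HEADER.size:
        raise ValueError("header too short")
    (riff, _riff_size, wave, fmt_id, fmt_size, audio_format, channels,
     sample_rate, _byte_rate, _block_align, bits_per_sample,
     data_id, data_size) = WAV_HEADER.unpack(header[:WAV_HEADER.size])
    if (riff, wave, fmt_id, data_id) != (b"RIFF", b"WAVE", b"fmt ", b"data"):
        raise ValueError("not a canonical WAV header")
    if fmt_size != 16 or audio_format != 1:
        raise ValueError("only uncompressed PCM is supported in this sketch")
    return WavInfo(channels, sample_rate, bits_per_sample, data_size)
```

The sample rate and bit depth from the header determine how the audio peripheral has to be configured, and the data size tells the player when playback should stop.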

A fuel-cell powered miniature car that navigates a maze using only sensor input — no shortcuts.

Programmed an MSP430 microcontroller to drive a miniature hydrogen fuel-cell car through a maze. Ultrasonic, touch, and light intensity sensors feed a decision algorithm that handles turning, reversing, and dead-end recovery. The tight energy budget of the fuel cell made efficiency a hard constraint: every unnecessary manoeuvre cost precious run time, so the navigation logic was heavily optimised for minimal movement.
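
A decision rule of this kind could be sketched as follows. This is a hypothetical Python illustration, not the MSP430 firmware: the sensor arrangement (three distance readings plus a touch bumper), the clearance threshold, and the move names are all assumptions, and the light intensity sensors are omitted.

```python
def choose_move(front_cm, left_cm, right_cm, bumper_pressed,
                clearance_cm=15.0):
    """Pick the next manoeuvre from the current sensor readings.

    Forward motion is preferred whenever possible, since every turn or
    reversal costs energy. `clearance_cm` is a hypothetical threshold.
    """
    if bumper_pressed:
        return "reverse"  # physical contact: back off before anything else
    if front_cm > clearance_cm:
        return "forward"  # cheapest option, take it whenever the way is open
    if left_cm > clearance_cm or right_cm > clearance_cm:
        # Turn toward the side with more room.
        return "turn_left" if left_cm >= right_cm else "turn_right"
    return "reverse"  # blocked on all three sides: dead-end recovery
```

Ordering the checks so that "forward" wins whenever the path is open is one simple way to keep the number of manoeuvres low.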

Gesture-driven robot control via LEAP Motion and ROS — 1st place at Deloitte Tech Exchange.

Developed at the Deloitte Tech Exchange competition, Maestro is a gesture interface that maps hand movements in 3D space to robot navigation commands. Using a LEAP Motion controller and the LEAP SDK, hand position and orientation are translated into speed, rotation direction, and linear movement for a ROS-controlled robot. The team took first place overall among the competing universities.

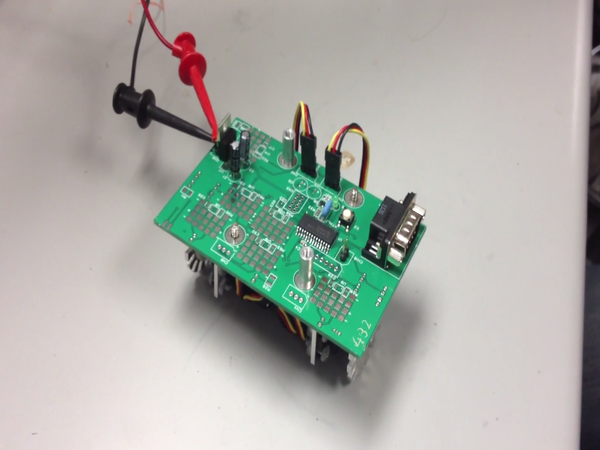

A line-following robot built entirely from first principles — custom PCB, sensors, and all signal conditioning.

Circuit Bot was built from the ground up on a custom PCB — no off-the-shelf sensor modules. Hall-effect, thermistor, current, light intensity, and optical encoder sensors were each designed from first principles and verified with an oscilloscope. Op-amp filter circuits were built to clean up noisy signals before they reached the microcontroller. The navigation firmware, written in C, uses the optical encoders and light sensors to follow a line course with consistent accuracy.